Do you need a custom benchmark?

Selecting an enterprise Generative AI model based on a public leaderboard score frequently leads to deployment failures. AI engineering teams can waste millions of dollars trying to force highly ranked foundation models into proprietary systems, only to watch those systems hallucinate basic facts or regress in performance once they are exposed to real business data. Public AI benchmarks are structurally compromised by test contamination and general-knowledge bias, but building a massive custom test from scratch on day one is an equally dangerous waste of resources: overbuilding an evaluation set risks overfitting to it before your system ever faces real users. Success requires a pragmatic maturity framework that scales from a handful of manual tests to an automated, continuous evaluation pipeline.

TL;DR:

Public performance leaderboards suffer from test-set contamination and frequently fail to predict generalized capability across enterprise deployments.

Enterprise knowledge bases require dynamic evaluation firmly grounded in specific information snapshots to measure retrieval performance accurately.

Building massive bespoke test sets during early proof-of-concept stages wastes engineering budget and increases the risk of overfitting an untested concept.

Production readiness requires moving from manual grading to nested evaluation systems that use algorithmic judges validated against human grading and backed by human red-teaming.

The illusion of generalized benchmark supremacy

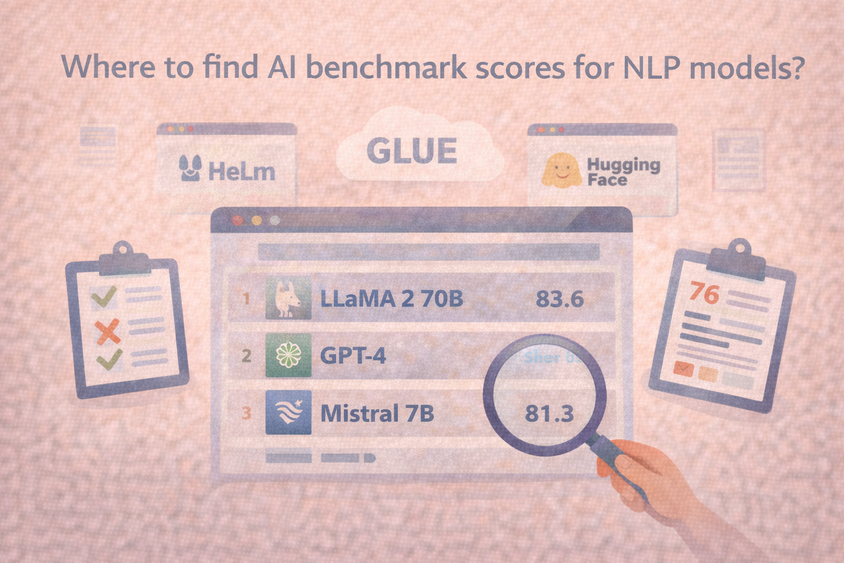

A model that scores 90 percent on a general-purpose public test can create a dangerous illusion of competence. Test-set contamination quickly renders public AI benchmarks obsolete, according to recent technical research from the LiveBench authors. Foundation models train on immense swaths of the open internet, and that relentless data scraping inevitably captures the specific questions, options, and answers for almost every major public evaluation framework.

When facing these standard evaluations, models can recall memorized internet data instead of exercising genuine reasoning. In tests detailed at NAACL 2024, ChatGPT and GPT-4 achieved high exact-match rates when asked to guess masked answer options in benchmark questions. The models could reproduce text that was hidden from the prompt, a strong sign that the test items appeared in their training data. High scores on contaminated tests say little about how a model will behave when processing private corporate documents it has never seen.
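The masked-option probe can be approximated in a few lines. This is a minimal sketch in the spirit of the NAACL 2024 test, not the authors' code: `MCItem`, `build_probe`, and `exact_match_rate` are hypothetical names, `guess_fn` stands in for whatever client calls your model, and masking the first incorrect option is an arbitrary simplification.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class MCItem:
    question: str
    options: List[str]  # answer options in their original order
    answer_index: int  # index of the correct option


def build_probe(item: MCItem) -> Tuple[str, str]:
    """Hide one incorrect option; return (prompt, hidden_text)."""
    # Mask the first option that is not the correct answer (an arbitrary choice).
    masked = next(i for i in range(len(item.options)) if i != item.answer_index)
    lines = [item.question]
    for i, opt in enumerate(item.options):
        label = chr(ord("A") + i)
        lines.append(f"{label}. {'[MASK]' if i == masked else opt}")
    lines.append("Reply with the exact text of the [MASK] option.")
    return "\n".join(lines), item.options[masked]


def _normalize(text: str) -> str:
    # Collapse whitespace and ignore case so formatting noise is not counted as a miss.
    return " ".join(text.split()).lower()


def exact_match_rate(items: List[MCItem], guess_fn: Callable[[str], str]) -> float:
    """Share of items where the model reproduces the hidden option verbatim."""
    if not items:
        return 0.0
    hits = sum(_normalize(guess_fn(p)) == _normalize(h) for p, h in map(build_probe, items))
    return hits / len(items)
```

A rate far above what blind guessing could reach suggests the items leaked into training data; a low rate does not prove the benchmark is clean.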

Generalized tests often fail to predict performance in bounded workflows. NIST 800-3 guidance distinguishes benchmark accuracy on a fixed test set from generalized accuracy across related real-world tasks. Measuring true operational capability requires explicit statistical models that account for the inherent randomness of large language models; a single score on a static test simply masks that randomness. Finding a true baseline starts with understanding why common evaluation frameworks miss the mark on subjective business intelligence tasks.
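To make the fixed-set versus generalized distinction concrete, the sketch below assumes each benchmark item was run several times and scored 0 or 1. It substitutes a simple two-stage bootstrap for the explicit statistical models the guidance calls for, so treat it as an illustration rather than the NIST method; the function names are hypothetical.

```python
import random
from statistics import mean
from typing import Dict, List, Tuple


def benchmark_accuracy(results: Dict[str, List[int]]) -> float:
    """Accuracy on this fixed item set: per-item mean over repeated runs, then averaged."""
    return mean(mean(runs) for runs in results.values())


def generalized_accuracy_ci(
    results: Dict[str, List[int]], n_boot: int = 2000, alpha: float = 0.05, seed: int = 0
) -> Tuple[float, float]:
    """Two-stage percentile bootstrap interval for accuracy on the wider task population.

    Stage 1 resamples items (the fixed set is one draw of possible tasks);
    stage 2 resamples each chosen item's repeated runs (the model's own randomness).
    """
    rng = random.Random(seed)
    items = list(results.values())
    n = len(items)
    stats = []
    for _ in range(n_boot):
        total = 0.0
        for _ in range(n):
            runs = rng.choice(items)  # stage 1: draw an item with replacement
            total += mean(rng.choice(runs) for _ in runs)  # stage 2: redraw its runs
        stats.append(total / n)
    stats.sort()
    lo = stats[int(round((alpha / 2) * n_boot))]
    hi = stats[int(round((1 - alpha / 2) * n_boot)) - 1]
    return lo, hi
```

The point estimate describes only these items; the interval is a rough indication of how much that number could move on a fresh draw of similar tasks and runs.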

Why enterprise workflows break standard AI evaluations

An engineering lead selects a newly released model purely because it scored perfectly on a standardized multiple-choice coding test. The engineering team wires the model into an internal human resources application designed to assist employees with complex benefit questions. Within two days of testing, the system confidently hallucinates a dental plan that the company dropped three years ago. Standardized tests assume static environments with unchanging inputs and highly objective answers. Corporate knowledge bases evolve constantly as employees rewrite internal guides and adjust strict compliance policies.

Information retrieval architectures demand evaluation datasets grounded in specific organizational facts. WixQA researchers demonstrated that end-to-end enterprise applications require testing against a specific knowledge base snapshot rather than generic open-domain trivia. Domain specificity fundamentally alters how foundation models perform and how they rank against each other: findings published at NAACL 2025 indicate that language models show materially different performance trends across 27 tested datasets and four expert domains than they do on general-knowledge benchmarks.
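One way to keep items tied to a snapshot is to store, with every question, the ID and a content hash of the document that supports its answer. The sketch below assumes the knowledge base can be loaded as a simple `{doc_id: text}` mapping; `GroundedItem`, `content_hash`, and `stale_items` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, List


def content_hash(text: str) -> str:
    """Fingerprint of a document's text at the moment an item was written."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class GroundedItem:
    question: str
    expected_answer: str
    source_doc_id: str  # hypothetical ID of the knowledge-base document backing the answer
    source_hash: str  # hash of that document in the snapshot the item was written against


def stale_items(items: List[GroundedItem], current_kb: Dict[str, str]) -> List[GroundedItem]:
    """Items whose supporting document changed or disappeared since the snapshot."""
    stale = []
    for item in items:
        doc = current_kb.get(item.source_doc_id)
        if doc is None or content_hash(doc) != item.source_hash:
            stale.append(item)
    return stale
```

Flagged items need their expected answers re-checked before the next run, which is where a dropped benefit plan like the one in the earlier example would surface.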

Multi-agent systems introduce cascading complexity across sequential automation steps. Authors of an Enterprise Agentic Benchmark Preprint found that traditional performance rankings poorly predict practical autonomous performance in business environments; newer generations of frontier models do not consistently beat older iterations on these concrete, multi-stage departmental workflows. Engineering groups can uncover why LLM deployments fail in production by aligning their test prompts with the daily operational reality of the target team.
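Cascading failures are easier to see when each step of an agent run is scored separately. The sketch assumes you already have a pass/fail judgment per step for each run; the helper names are hypothetical, and `compounded_success` adds the simplifying assumption that steps fail independently.

```python
from collections import Counter
from typing import List, Optional


def first_failure(step_results: List[bool]) -> Optional[int]:
    """Index of the first failed step in one run, or None if every step passed."""
    for i, ok in enumerate(step_results):
        if not ok:
            return i
    return None


def summarize(runs: List[List[bool]]) -> dict:
    """End-to-end success rate plus where failing runs first broke."""
    firsts = [first_failure(r) for r in runs]
    return {
        "end_to_end_success": sum(f is None for f in firsts) / len(runs),
        "first_failure_by_step": dict(Counter(f for f in firsts if f is not None)),
    }


def compounded_success(per_step: float, n_steps: int) -> float:
    """End-to-end success if every step must pass and steps fail independently."""
    return per_step ** n_steps
```

Under that independence assumption, 95 percent per-step reliability across ten steps yields only about 60 percent end-to-end success (0.95^10 ≈ 0.599), which is why step-level attribution matters.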

The ROI trap of early-stage custom benchmarks

Recognizing that public leaderboards lack direct business relevance pushes many data science leads toward the opposite extreme. Teams naturally conclude they need to immediately collect thousands of proprietary workflow examples to create a strong defense against hallucinations. Trying to build a custom benchmark of massive scale before proving basic model viability burns critical engineering hours and significantly delays project launches.

Thorough evaluation pipelines require sophisticated annotation capabilities and heavy statistical rigor. Authors of an Enterprise LLM Evaluation Benchmark Preprint document that highly customized evaluation setups carry severe business risks related to deployment cost and slower setup times. Gathering a massive dataset during a proof-of-concept phase creates incredibly rigid boundaries around an application that has not yet faced unpredictable user interactions.

Demanding massive evaluation datasets upfront forces project leads to lock in prompt designs and architectural choices based on premature assumptions. Early testing easily falls victim to overfitting the evaluation pipeline to tiny, unrepresentative edge cases just to satisfy a completion metric on an internal spreadsheet. Successful scaling preserves budget by introducing complexity only as the generative application matures toward public availability.
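A common guard against tuning prompts to a handful of edge cases is to hold back part of the evaluation set and check it only at milestones. The sketch below assigns items to a development or held-out split from a hash of each item ID; the 30 percent held-out share and all function names are illustrative assumptions.

```python
import hashlib
from typing import Dict, List


def split_for_item(item_id: str, holdout_fraction: float = 0.3) -> str:
    """Deterministically assign an item to 'dev' or 'holdout' from a hash of its ID."""
    digest = hashlib.sha256(item_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform in [0, 1)
    return "holdout" if bucket < holdout_fraction else "dev"


def split_items(item_ids: List[str], holdout_fraction: float = 0.3) -> Dict[str, List[str]]:
    out: Dict[str, List[str]] = {"dev": [], "holdout": []}
    for item_id in item_ids:
        out[split_for_item(item_id, holdout_fraction)].append(item_id)
    return out


def overfit_gap(dev_acc: float, holdout_acc: float) -> float:
    """A positive gap suggests prompts are tuned to the dev items rather than the task."""
    return dev_acc - holdout_acc
```

Hashing keeps assignments stable as tasks are added, and a widening `overfit_gap` between the two splits is an early signal that prompt changes are chasing the development items.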

The custom benchmark maturity framework

Protecting your deployment budget requires scaling your evaluation environment in distinct operational phases. Jumping straight into automated judging loops skips the critical requirement of defining what constitutes an accurate answer for your specific industry. Engineering teams mature their testing protocols alongside the underlying software architecture.

Phase 1: The proof-of-concept baseline

Early viability testing focuses purely on filtering out unsuitable models before significant financial investment occurs. Building extensive private tests immediately is rarely worth the staff time or expense. A highly representative set of 20 to 50 tasks is a sufficient starting point for evaluating an early-phase concept.

These carefully selected examples let prototype developers compare model capabilities manually and verify basic logic. Product teams can keep tracking the same small baseline set across the stages of benchmark utility as experimental prototypes progress into internal pilot programs.

Reviewing a small pool of examples gives immediate visibility into basic cost and accuracy trade-offs. Internal testing on a 20-task custom set showed GPT-5 scoring 0.89 accuracy and GPT-5-mini scoring 0.87. A drop that small can justify routing the bulk of high-volume internal requests to the significantly cheaper mini model.
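Before acting on a gap like this, it helps to check it against sampling noise. The sketch treats each of the 20 tasks as pass/fail (an approximation if the 0.89 and 0.87 figures came from partial-credit grading); the selection rule is one simple option, and the `relative_cost` values in any usage are hypothetical placeholders, not real pricing.

```python
import math
from typing import Dict


def binomial_se(accuracy: float, n_tasks: int) -> float:
    """Standard error of an accuracy measured on n independent pass/fail tasks."""
    return math.sqrt(accuracy * (1 - accuracy) / n_tasks)


def cheapest_within_margin(models: Dict[str, Dict[str, float]], n_tasks: int, z: float = 1.0) -> str:
    """Cheapest model whose accuracy is within z standard errors of the best one.

    `models` maps a name to {"accuracy": ..., "relative_cost": ...}.
    """
    best = max(m["accuracy"] for m in models.values())
    margin = z * binomial_se(best, n_tasks)
    eligible = {name: m for name, m in models.items() if best - m["accuracy"] <= margin}
    return min(eligible, key=lambda name: eligible[name]["relative_cost"])
```

With n = 20, the standard error at 0.89 accuracy is roughly 0.07, so a 0.02 difference sits well inside one standard error and the cheaper model wins under this rule.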

Phase 2: Domain-specific human baselines

Subjective domain expertise demands a thoroughly documented human standard before automated continuous evaluation is statistically viable. Using generalized foundational models to judge complex specialized terminology without first measuring human consensus generates falsely confident system metrics. High failure rates during this phase primarily point to fundamentally misaligned prompting strategies.

Nuanced corporate workflows regularly challenge top human professionals as well as AI systems. In a published test of real-world legal work, human reviewers were reliably accurate on just 56.7 percent of standard contract drafting tasks. The top human lawyer reached a 70 percent success rate, narrowly exceeded by the leading AI tool at 73.3 percent.

Organizations assessing highly specialized environments need platforms that support complex taxonomy configurations. HumanSignal offers a data annotation environment for establishing strict interrater agreement across multiple human experts. A quantified baseline of human performance is the foundation for trusting eventual automated scoring loops.
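Interrater agreement among more than two experts is often summarized with Fleiss' kappa. The sketch below is a plain implementation of that statistic, assuming every item was labeled by the same number of reviewers; it is independent of any particular annotation platform, and the pass/fail labels are illustrative.

```python
from typing import List


def fleiss_kappa(ratings: List[List[str]]) -> float:
    """Fleiss' kappa for items that were each labeled by the same number of raters.

    ratings[i] holds every rater's label for item i, e.g. ["pass", "pass", "fail"].
    """
    if not ratings:
        raise ValueError("need at least one rated item")
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(row) != n_raters for row in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")
    categories = sorted({label for row in ratings for label in row})
    # counts[i][j] = number of raters who put item i in category j
    counts = [[row.count(c) for c in categories] for row in ratings]
    # Observed agreement: share of agreeing rater pairs per item, averaged over items.
    p_bar = sum(
        (sum(x * x for x in row) - n_raters) / (n_raters * (n_raters - 1)) for row in counts
    ) / n_items
    # Chance agreement from the overall share of labels in each category.
    totals = [sum(row[j] for row in counts) for j in range(len(categories))]
    p_e = sum((t / (n_items * n_raters)) ** 2 for t in totals)
    if p_e == 1.0:
        return 1.0  # one label used everywhere: kappa is undefined, treated as perfect here
    return (p_bar - p_e) / (1 - p_e)
```

Values near 1 indicate strong agreement; values at or below 0 mean the experts agree no more than chance would predict, a sign that the rubric or taxonomy needs work before any judge is calibrated against it.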

Phase 3: Nested evaluation and automated judging

High-volume production environments need oversight that moves far faster than human review panels can read. Deep system assessment requires nesting multiple methods well beyond simple scripted pass or fail checks. Combining organic human red-teaming with rigid algorithmic boundary checks catches subtle logical failures that code validation alone misses.
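A nested pipeline can be sketched as layers that run from cheapest to most expensive. In this minimal version, the specific rule checks (including a hypothetical `[doc:ID]` citation format), the 0.7 pass threshold, the borderline band, and the 5 percent audit rate are all illustrative assumptions, and `judge_fn` stands in for a call to a judge model.

```python
import random
import re
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Verdict:
    passed: bool
    reasons: List[str] = field(default_factory=list)
    needs_human_review: bool = False


def rule_checks(answer: str, max_chars: int = 1200) -> List[str]:
    """Cheap deterministic checks; these particular rules are illustrative placeholders."""
    problems = []
    if not answer.strip():
        problems.append("empty answer")
    if len(answer) > max_chars:
        problems.append("answer too long")
    if not re.search(r"\[doc:[\w/-]+\]", answer):  # hypothetical [doc:ID] citation format
        problems.append("missing source citation")
    return problems


def evaluate(
    answer: str,
    judge_fn: Callable[[str], float],
    rng: random.Random,
    pass_threshold: float = 0.7,
    borderline: float = 0.1,
    audit_rate: float = 0.05,
) -> Verdict:
    """Layered check: rules first, then an LLM judge, then routing to human review."""
    problems = rule_checks(answer)
    if problems:
        return Verdict(False, problems)  # fail fast without spending a judge call
    score = judge_fn(answer)  # hypothetical judge returning a score in [0, 1]
    verdict = Verdict(score >= pass_threshold, [f"judge score {score:.2f}"])
    # Borderline scores plus a random audit sample go to human reviewers.
    if abs(score - pass_threshold) < borderline or rng.random() < audit_rate:
        verdict.needs_human_review = True
    return verdict
```

Rule failures short-circuit before any judge call, while borderline scores and a random audit sample flow to human reviewers, who can also red-team the cases the automated layers pass.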

Scaling operations often means deploying secondary language models as algorithmic judges that grade the outputs of the primary application model. NIST guidelines state that development teams should validate these judging setups through rubric quality measurements, direct comparison with human grading, and interrater agreement protocols.
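The comparison with human grading can start as a simple agreement check. The sketch below computes raw agreement and Cohen's kappa between judge labels and a human consensus label for the same items; the 0.6 `min_kappa` cutoff is an illustrative assumption, not a NIST threshold.

```python
from collections import Counter
from typing import List


def cohen_kappa(a: List[str], b: List[str]) -> float:
    """Cohen's kappa between two label sequences (e.g. LLM judge vs. human consensus)."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    ca, cb = Counter(a), Counter(b)
    # Agreement expected if both raters labeled independently at their own base rates.
    expected = sum(ca[k] * cb[k] for k in ca.keys() | cb.keys()) / (n * n)
    if expected == 1.0:
        return 1.0  # both used one identical label: kappa is undefined, treated as perfect here
    return (observed - expected) / (1 - expected)


def validate_judge(judge: List[str], human: List[str], min_kappa: float = 0.6) -> dict:
    """Summarize judge-human agreement against an illustrative kappa cutoff."""
    agreement = sum(j == h for j, h in zip(judge, human)) / len(human)
    kappa = cohen_kappa(judge, human)
    return {"agreement": agreement, "kappa": kappa, "trusted": kappa >= min_kappa}
```

Kappa corrects raw agreement for the matches expected by chance, which matters when most answers pass and a judge that always says "pass" would still look accurate.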

Moving from manual grading spreadsheets to a fully nested algorithmic judging pipeline introduces serious technical overhead for a small engineering team. HumanSignal provides scalable evaluation infrastructure for running automated judging loops and tracking their alignment with the original human baselines over time.

Moving from static tests to continuous assessment

A reliable framework operates continuously within a deployment environment to track ongoing model performance. Relying purely on generalized public assessment ignores the highly specific vocabulary and rigid compliance rules defining your daily reality. Assuming a bespoke dataset remains fully applicable after a full year of operation ignores the rapidly shifting inputs constantly entering your broader software ecosystem.

Safeguarding enterprise intelligence tools requires constantly updating prompts and rapidly adjusting ground truth standards as internal wikis evolve. HumanSignal gives specialized teams the dedicated operational infrastructure needed to scale a continuous validation ecosystem without pulling senior software engineers off critical product features.

Business leaders maintain visibility by tracking model cost versus performance over time to ensure initial architectural choices remain financially viable at scale. Platform operators retain the granular flexibility necessary for customizing specific agreement logic among their external experts. If you do not perform rigorous continuous evaluation of your generative applications internally, you are asking your uncompensated customers to do the beta testing in production.
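Tracking cost against performance over time can start with a log of periodic evaluation runs. In the sketch below, `EvalRun`, the run labels, and the 3-point regression tolerance are hypothetical; cost is in whatever unit you already track.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EvalRun:
    label: str  # e.g. a date or release tag
    accuracy: float  # share of benchmark tasks graded correct
    total_cost: float  # spend for this run, in your own currency unit
    n_tasks: int


def cost_per_correct(run: EvalRun) -> float:
    """Spend divided by the number of correctly answered tasks."""
    correct = run.accuracy * run.n_tasks
    return float("inf") if correct == 0 else run.total_cost / correct


def flag_regressions(runs: List[EvalRun], baseline: EvalRun, tolerance: float = 0.03) -> List[str]:
    """Labels of runs whose accuracy fell more than `tolerance` below the baseline run."""
    return [r.label for r in runs if baseline.accuracy - r.accuracy > tolerance]
```

Cost per correct answer folds both trends into a single number, so a cheaper model that quietly loses accuracy can show up as a rising figure rather than a falling bill.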

What is a custom benchmark in machine learning?

A proprietary evaluation dataset paired with a scoring methodology designed specifically for a single organization. It measures how well an AI system executes the specific operational workflow it will face in production. Unlike a public leaderboard, it is built to match the dynamic reality of a private organizational environment.

How much does it cost to build a custom AI benchmark?

Costs scale with the technical complexity of each operational domain. Reviewing basic customer service chat logs can be done on a small budget with general crowdsourced data labelers. Hiring highly paid subject matter experts, such as registered clinicians or licensed attorneys, to review technical model outputs costs far more per task.
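A rough budget follows from reviewer time multiplied by rate. The sketch assumes every task is read by a fixed number of reviewers at a flat hourly rate; `annotation_cost` is a hypothetical helper, and all inputs are placeholders for your own figures, not market rates.

```python
def annotation_cost(n_tasks: int, reviewers_per_task: int, minutes_per_review: float, hourly_rate: float) -> float:
    """Rough labeling spend: each task is read by every assigned reviewer at a flat hourly rate."""
    hours = n_tasks * reviewers_per_task * minutes_per_review / 60
    return hours * hourly_rate
```

For example, 50 tasks, 3 reviewers each, 10 minutes per review, and a placeholder rate of 60 per hour come to 25 review hours, or 1,500 in that currency; expert domains raise both the rate and the minutes per review.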

Why is data contamination a problem for AI benchmarks?

Foundation model developers aggressively scrape immense portions of the open internet during primary training cycles. Unfiltered training datasets regularly absorb the literal questions and corresponding answers used in popular public evaluation sets. Affected models can appear highly intelligent because they memorized the answer key, which makes their scores unreliable as measures of genuine reasoning.

Can automated judges fully replace human annotators?

Algorithmic judges offer the speed and scale that launched applications need, but only after rigorous baseline validation. Organizations should test an automated judge against human interrater scoring and formally measure rubric quality before trusting its results. Relying solely on algorithmic grading without grounding it in human validation can reinforce unhelpful statistical biases.

How frequently should a custom benchmark be updated?

Deployment teams should revise core evaluation tasks whenever the underlying corporate knowledge expands or shifting user intent changes how the application is used. Static benchmark sets saturate quickly and fail to reflect the dynamic mechanics of modern retrieval environments. An effective benchmark is a strictly versioned, living representation of your active software ecosystem.
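Saturation is straightforward to detect once results are stored per model and per item. The sketch flags items that every evaluated model passes as candidates for retirement and bumps a `major.minor` version whenever the task set changes; the data layout, the two-model minimum, and the versioning scheme are all assumptions.

```python
from typing import Dict, List


def saturated_items(results: Dict[str, Dict[str, bool]], min_models: int = 2) -> List[str]:
    """Item IDs that every evaluated model passed; candidates to retire or replace.

    results[model][item_id] is True when that model passed that item.
    """
    if len(results) < min_models:
        return []  # too few models to call any item saturated
    shared = set.intersection(*(set(r) for r in results.values()))
    return sorted(i for i in shared if all(r[i] for r in results.values()))


def bump_version(version: str, changed_items: bool) -> str:
    """Increment the minor part of a 'major.minor' version when tasks were added or retired."""
    major, minor = (int(x) for x in version.split("."))
    return f"{major}.{minor + 1}" if changed_items else version
```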